Undergraduate Research

My undergraduate research involved mobile millirobots: autonomous navigation, mapping and learning of complex terrains, and control using sensory feedback.

I worked under Prof. Ronald Fearing in the Biomimetic Milli-systems Lab, part of Berkeley AI Research (BAIR).

Body Lift and Drag for a Legged Millirobot in Compliant Beam Environment

Cem Koc*,

Can Koc*,

Brian Su*,

In ICRA 2019

poster, arXiv, video

Much current study of legged locomotion has rightly focused on foot traction forces, including on granular media. Future legged millirobots will need to move through terrain such as brush or other vegetation, where body contact forces significantly affect locomotion. In this work, a previously developed low-cost 6-axis force/torque sensing shell is used to measure the interaction forces between a hexapedal millirobot and a set of compliant beams, which act as a surrogate for a densely cluttered environment. Experiments with a VelociRoACH robotic platform are used to measure lift and drag forces on the tactile shell; negative lift forces can increase traction, even as drag forces increase. The drag energy and specific resistance required to pass through dense terrain can also be measured. Furthermore, some contact between the robot and the compliant beams can lower the specific resistance of locomotion. For small, lightweight legged robots in the beam environment, body motion depends on both leg-ground and body-beam forces. A shell shape that reduces drag but increases negative lift, such as the half-ellipsoid used here, is suggested to be advantageous for robot locomotion in this type of environment.
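The quantities named in the abstract follow standard definitions, sketched below in Python as an illustration rather than the paper's actual processing code. The helper names, the sampling layout (drag force sampled at known forward positions), the quasi-static force balance, and the value of g are all assumptions. Drag energy is the work done against drag, ∫ F_drag dx; specific resistance is energy per unit weight per unit distance, E / (m g d), where E is whichever energy is being accounted for (for example drag energy alone, or total electrical energy); and with lift measured positive upward, negative lift adds to the ground normal force.

```python
from typing import Sequence

G = 9.81  # gravitational acceleration in m/s^2 (assumed constant)


def drag_energy(positions_m: Sequence[float], drag_n: Sequence[float]) -> float:
    """Work done against drag, W = integral of F_drag dx (trapezoidal rule).

    positions_m: forward displacement samples in meters, in time order.
    drag_n: drag force magnitude in newtons, sampled at the same instants.
    """
    if len(positions_m) != len(drag_n) or len(positions_m) < 2:
        raise ValueError("need at least two matching position/force samples")
    work = 0.0
    for i in range(1, len(positions_m)):
        dx = positions_m[i] - positions_m[i - 1]
        # Average force over the interval times the distance covered.
        work += 0.5 * (drag_n[i] + drag_n[i - 1]) * dx
    return work


def specific_resistance(energy_j: float, mass_kg: float, distance_m: float) -> float:
    """Dimensionless specific resistance (cost of transport): E / (m * g * d)."""
    return energy_j / (mass_kg * G * distance_m)


def normal_force(mass_kg: float, lift_n: float) -> float:
    """Quasi-static ground normal force, with lift measured positive upward.

    Negative lift (the beams pressing the shell down) raises the normal force,
    which raises the friction limit mu * N available to the legs.
    """
    return mass_kg * G - lift_n
```

For example, a constant 1 N of drag over 2 m gives `drag_energy([0.0, 1.0, 2.0], [1.0, 1.0, 1.0]) == 2.0` J.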

Projects

Here you can find some of the projects I've worked on. I am especially interested in projects at the intersection of Machine Learning, Reinforcement Learning, and Distributed/Parallel Computing.

Parallelizing Chamfer Distance Computation for Point Cloud Similarity

slides, report

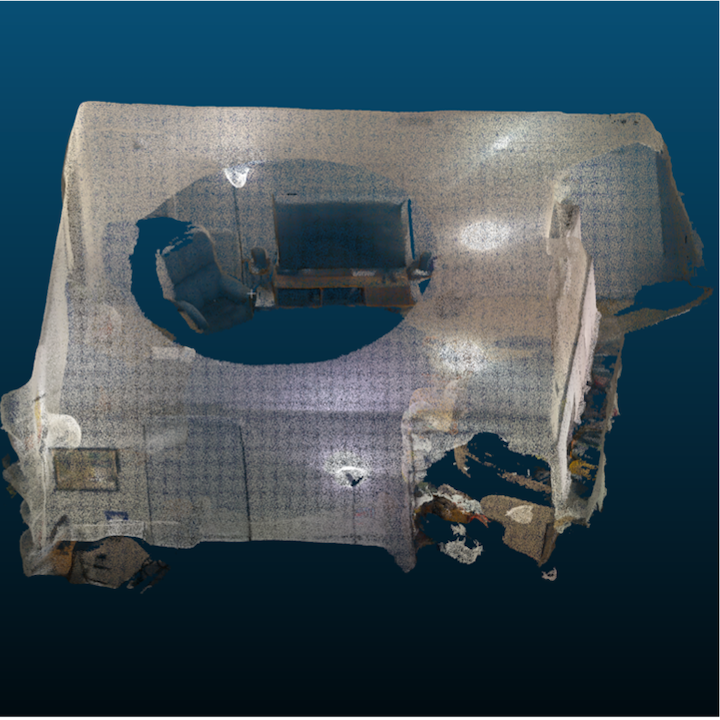

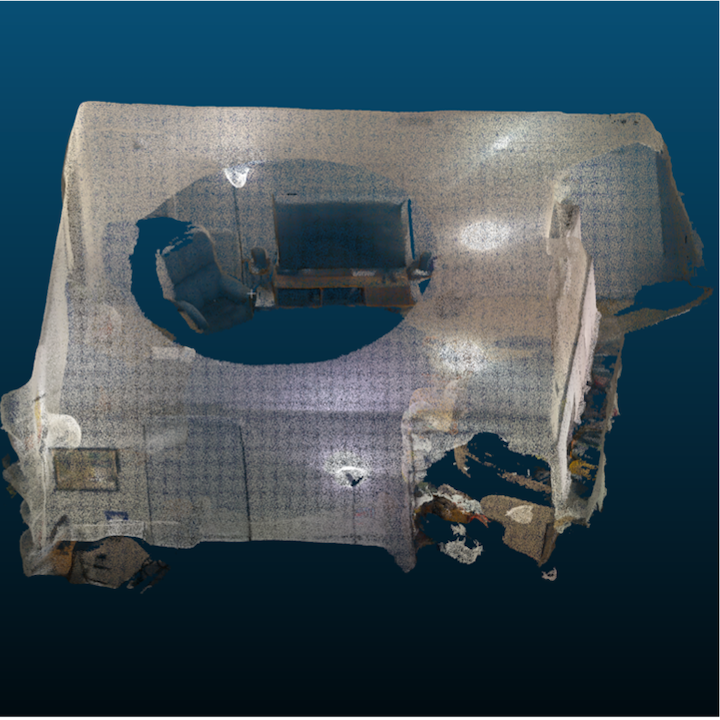

Given the increasing prevalence of point cloud data from LiDAR sensors, efficient processing of massive amounts of this data has become incredibly important. The Chamfer distance is an important similarity metric for comparing two point cloud scans, often of the same scene. We made this computation much faster by restructuring it as a parallel computing problem. We used both k-d trees and octrees to avoid the quadratic cost of the naive all-pairs search, and implemented four different Chamfer distance algorithms, including two approximation-based ones, using OpenMP and CUDA to explore the potential for increased computational efficiency. Compared with the naive implementation, both our OpenMP and CUDA implementations were significantly faster, which bodes well for consumer and industry applications.
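The core computation can be sketched in plain Python. This is an illustrative, serial sketch, not the project's OpenMP/CUDA code: it assumes the common convention of summing the mean squared nearest-neighbor distance in each direction (other works use plain sums or unsquared distances), and all function names are hypothetical. The brute-force version compares every pair of points, while the k-d tree version skips any subtree whose splitting plane is farther away than the best match found so far. Because each nearest-neighbor query is independent, the loop over query points is the part that maps naturally onto an OpenMP parallel loop or one CUDA thread per point.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, ...]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def chamfer_naive(p: List[Point], q: List[Point]) -> float:
    """Brute force, O(|P| * |Q|): every point is compared with every other."""
    p_to_q = sum(min(sq_dist(a, b) for b in q) for a in p) / len(p)
    q_to_p = sum(min(sq_dist(b, a) for a in p) for b in q) / len(q)
    return p_to_q + q_to_p


class KDNode:
    """One k-d tree node: a point plus the axis it splits on."""

    __slots__ = ("point", "axis", "left", "right")

    def __init__(self, point: Point, axis: int,
                 left: Optional["KDNode"], right: Optional["KDNode"]):
        self.point = point
        self.axis = axis
        self.left = left
        self.right = right


def build_kdtree(points: List[Point], depth: int = 0) -> Optional[KDNode]:
    """Recursively split on the median along an axis that cycles x, y, z, ..."""
    if not points:
        return None
    axis = depth % len(points[0])
    pts = sorted(points, key=lambda pt: pt[axis])
    mid = len(pts) // 2
    return KDNode(pts[mid], axis,
                  build_kdtree(pts[:mid], depth + 1),
                  build_kdtree(pts[mid + 1:], depth + 1))


def nearest_sq_dist(node: Optional[KDNode], target: Point,
                    best: float = math.inf) -> float:
    """Exact squared distance from `target` to its nearest point in the tree."""
    if node is None:
        return best
    best = min(best, sq_dist(node.point, target))
    diff = target[node.axis] - node.point[node.axis]
    near, far = (node.left, node.right) if diff < 0 else (node.right, node.left)
    best = nearest_sq_dist(near, target, best)
    # The far side can only hold a closer point if the splitting plane
    # is closer than the best match found so far.
    if diff * diff < best:
        best = nearest_sq_dist(far, target, best)
    return best


def chamfer_kdtree(p: List[Point], q: List[Point]) -> float:
    """Same metric; each nearest-neighbor query walks a k-d tree instead."""
    tree_p, tree_q = build_kdtree(p), build_kdtree(q)
    p_to_q = sum(nearest_sq_dist(tree_q, a) for a in p) / len(p)
    q_to_p = sum(nearest_sq_dist(tree_p, b) for b in q) / len(q)
    return p_to_q + q_to_p


if __name__ == "__main__":
    rng = random.Random(0)
    cloud_a = [tuple(rng.random() for _ in range(3)) for _ in range(500)]
    cloud_b = [tuple(rng.random() for _ in range(3)) for _ in range(400)]
    # Both versions compute the same value; only the running time differs.
    print(chamfer_naive(cloud_a, cloud_b), chamfer_kdtree(cloud_a, cloud_b))
```

An octree plays the same role as the k-d tree here (a spatial index that prunes far-away regions); approximate variants typically trade exactness for speed by stopping the search early.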

Detecting Faces in Anime Using Supervised Domain Adaptation

Cem Koc*,

Can Koc*,

Brian Su*,

poster, report

We wanted to explore domain adaptation and transfer learning. We created an entirely new dataset of anime images from frames and hand-labeled whether each one contains a face. We then fine-tuned GoogLeNet, AlexNet, and VGGFace models (pre-trained on ImageNet 2014) on this anime face dataset and reached ~97% accuracy.

Prioritized Experience Replay Kills on ViZDoom

poster

Implemented Prioritized Experience Replay from the 2016 paper by Schaul et al. to play ViZDoom. Prioritized Experience Replay significantly reduced training time on both dense- and sparse-reward games compared with the DQN paper's baseline.

The agent was trained on a single GPU using TF Keras.
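The proportional variant of prioritized replay described by Schaul et al. can be sketched with a sum tree, so that sampling transition i with probability P(i) = p_i^α / Σ_k p_k^α and updating a priority both take time logarithmic in the buffer size. This is a framework-agnostic illustration, not the project's TF Keras code: the class and method names are hypothetical, the defaults α = 0.6 and β = 0.4 are commonly used values rather than the project's settings, and the Q-network, TD-error computation, and β annealing schedule are omitted. Priorities are p_i = |δ_i| + ε, and importance-sampling weights (N · P(i))^(-β) are normalized by the largest weight in the batch.

```python
import random
from typing import Any, List, Tuple


class SumTree:
    """Array-backed binary tree; each internal node holds its children's sum."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.tree = [0.0] * (2 * capacity)  # root at 1, leaves at [capacity, 2*capacity)
        self.data: List[Any] = [None] * capacity
        self.next = 0  # slot to write next (the oldest entry once full)
        self.size = 0

    def total(self) -> float:
        return self.tree[1]

    def update(self, leaf: int, priority: float) -> None:
        i = leaf + self.capacity
        self.tree[i] = priority
        i //= 2
        while i >= 1:  # refresh every ancestor's sum up to the root
            self.tree[i] = self.tree[2 * i] + self.tree[2 * i + 1]
            i //= 2

    def add(self, item: Any, priority: float) -> None:
        self.data[self.next] = item
        self.update(self.next, priority)
        self.next = (self.next + 1) % self.capacity
        self.size = min(self.size + 1, self.capacity)

    def find(self, mass: float) -> int:
        """Leaf whose slice of the cumulative priority contains `mass`."""
        i = 1
        while i < self.capacity:
            left = 2 * i
            if mass < self.tree[left]:
                i = left
            else:
                mass -= self.tree[left]
                i = left + 1
        # Guard against float round-off landing on a not-yet-filled leaf.
        return min(i - self.capacity, self.size - 1)


class PrioritizedReplay:
    """Proportional prioritized experience replay (sketch)."""

    def __init__(self, capacity: int, alpha: float = 0.6, eps: float = 1e-6):
        self.tree = SumTree(capacity)
        self.alpha = alpha
        self.eps = eps
        self.max_priority = 1.0  # new transitions get the largest priority seen

    def push(self, transition: Any) -> None:
        self.tree.add(transition, self.max_priority ** self.alpha)

    def sample(self, batch_size: int, beta: float,
               rng: random.Random) -> Tuple[List[int], List[Any], List[float]]:
        total = self.tree.total()
        if self.tree.size == 0 or total <= 0.0:
            raise ValueError("cannot sample from an empty buffer")
        segment = total / batch_size
        idxs, items, weights = [], [], []
        for k in range(batch_size):
            # Stratified sampling: one draw from each equal slice of total priority.
            leaf = self.tree.find(rng.uniform(k * segment, (k + 1) * segment))
            prob = self.tree.tree[leaf + self.tree.capacity] / total
            # Importance-sampling weight (N * P(i))^-beta corrects the sampling bias.
            weights.append((self.tree.size * prob) ** -beta)
            idxs.append(leaf)
            items.append(self.tree.data[leaf])
        max_w = max(weights)
        return idxs, items, [w / max_w for w in weights]

    def update_priorities(self, idxs: List[int], td_errors: List[float]) -> None:
        for leaf, err in zip(idxs, td_errors):
            p = abs(err) + self.eps  # eps keeps every priority strictly positive
            self.max_priority = max(self.max_priority, p)
            self.tree.update(leaf, p ** self.alpha)
```

In a DQN loop, the returned weights would scale each transition's loss, and the new absolute TD errors would be passed back through `update_priorities`.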
